The Million Dollar Case Study Session #15: Amazon Split Testing Best Practices

Welcome to the recap of Session #15 of the Million Dollar Case Study. This session was an inspiring look into the world of Amazon Split Testing, and how it can help optimize a private label product listing for increased profits. Joining Gen on the webinar is Andrew Browne from Splitly – the smartest tool for Amazon optimization, using artificial intelligence and automation to build a fine-tuned, high-converting product listing.

If you want to try Splitly today, use the code 'Million' for 25% off your first month!

We already covered finding the right keywords in Session #11, and Scott Voelker covered Amazon search engine optimization in the last session. So how can we keep improving from here?

The answer is with Split Testing, or A/B testing. Let's find out what that looks like for Amazon sellers…

What is Split Testing & Why Do I Need It?

If you have been in the digital marketing world previously, you may already know the answer. For some Amazon sellers, Split Testing is an entirely new concept. Andrew starts by explaining Split Testing in simple terms:

Split Testing is where you have two different versions of something, and test which one is better, with a data-driven and controlled experiment.

In ecommerce, this often means testing two different versions of a product page to see which one results in more conversions. On the surface, it really is this simple: we want to find out how changes to a product page affect the number of sales.

The complexity behind Split Testing is ensuring that this is tested in a reliable and controlled manner, and this is where software like Splitly comes in, to automate these scientific experiments. However, it is possible to run tests manually, if you have the time and inclination. We're going to cover both of these options in more detail in this recap.

Why do we want to Split Test on Amazon?

Andrew covers three core reasons why Split Testing is one of the smartest ways to get ahead as a seller today:

- Improve conversion rate – this has a compound effect: a higher conversion rate increases your sales, that increase in sales velocity improves your rank, and a better rank results in even more sales

- Get more visitors – likewise, an increase in CTR (click-through rate) and sessions (visitors to your page) can help to increase sales

- Price wisely – one of the biggest wins is to optimize your pricing consistently so that you are always one step ahead, which can have a huge positive impact on your bottom line

Why can't I just Split Test manually?

At this point you may be wondering if you can just change your price, or your lead image, for a few weeks, and then compare it to the previous version.

Andrew explains carefully why doing this will not give reliable results. To put it simply, Amazon has a lot of moving parts, including market conditions, seasonality, demand, competition and more. All of these are constantly changing, so if you change your lead image for two weeks and see an improvement in conversions, you can't be sure that the improvement came from the new image.

For this reason, the best way to make solid business decisions about listing optimization is to split test, comparing two versions of your listing over the same period rather than one after the other, and ideally to automate the process. It is possible to run these tests manually, but it requires a very hands-on approach.

How Does Split Testing Work on Amazon?

Andrew explains that split testing works quite differently on Amazon than on your own website, mostly because you have much less control over an Amazon listing than over your own ecommerce store.

The key constraints are that Amazon reports traffic data in 24-hour periods, and that sales velocity affects search rank over a rolling 30-day average.

This means two things:

- You must make changes in your split test every day at midnight (Pacific time for Amazon.com or Universal time for UK, etc.), for at least two weeks

- It's much easier to run a simple A/B test, where you just test two variants, rather than testing multiple variants of your listing

In the live webinar, Andrew walks through how you could change your pricing each day at midnight. This is laborious: you need to set reminders so you make the change on time each day, then pull your sales data from Seller Central and keep a record of it for the duration of your split test experiment.

So at the end of your test, every odd day will have run variant A and every even day variant B. At this point, you will likely see that one of your variants has performed better than the other. But how do you know this wasn't just luck? Enter statistical significance.
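The manual bookkeeping described above can be sketched in a few lines of Python. This is a minimal, hypothetical sketch, not Splitly's implementation: it assumes you copy each day's sessions and orders from Seller Central into a list by hand, and that day 1 of the test (the start date) runs variant A, matching the odd/even scheme above. The names `DailyRecord`, `variant_for_day` and `totals_by_variant` are illustrative only.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DailyRecord:
    """One day of data copied by hand from Seller Central (hypothetical layout)."""
    day: date
    sessions: int
    orders: int


def variant_for_day(start: date, day: date) -> str:
    """Odd-numbered test days run variant A, even-numbered days run variant B."""
    day_number = (day - start).days + 1  # the start date counts as day 1
    return "A" if day_number % 2 == 1 else "B"


def totals_by_variant(records: list[DailyRecord], start: date) -> dict[str, dict[str, int]]:
    """Sum sessions and orders separately for each variant."""
    totals = {v: {"sessions": 0, "orders": 0} for v in ("A", "B")}
    for record in records:
        variant = variant_for_day(start, record.day)
        totals[variant]["sessions"] += record.sessions
        totals[variant]["orders"] += record.orders
    return totals


if __name__ == "__main__":
    start = date(2024, 1, 1)
    # Four days of made-up numbers, for illustration only.
    records = [
        DailyRecord(date(2024, 1, 1), 100, 10),
        DailyRecord(date(2024, 1, 2), 120, 15),
        DailyRecord(date(2024, 1, 3), 90, 8),
        DailyRecord(date(2024, 1, 4), 110, 14),
    ]
    print(totals_by_variant(records, start))
    # prints {'A': {'sessions': 190, 'orders': 18}, 'B': {'sessions': 230, 'orders': 29}}
```

The per-variant totals are exactly the sessions and conversions you would enter into a significance calculator.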

Statistical Significance Explained

Statistical significance tells you how unlikely it is that the difference you observed between your variants is down to chance alone. In other words, it helps you judge whether your split test results are reliable.

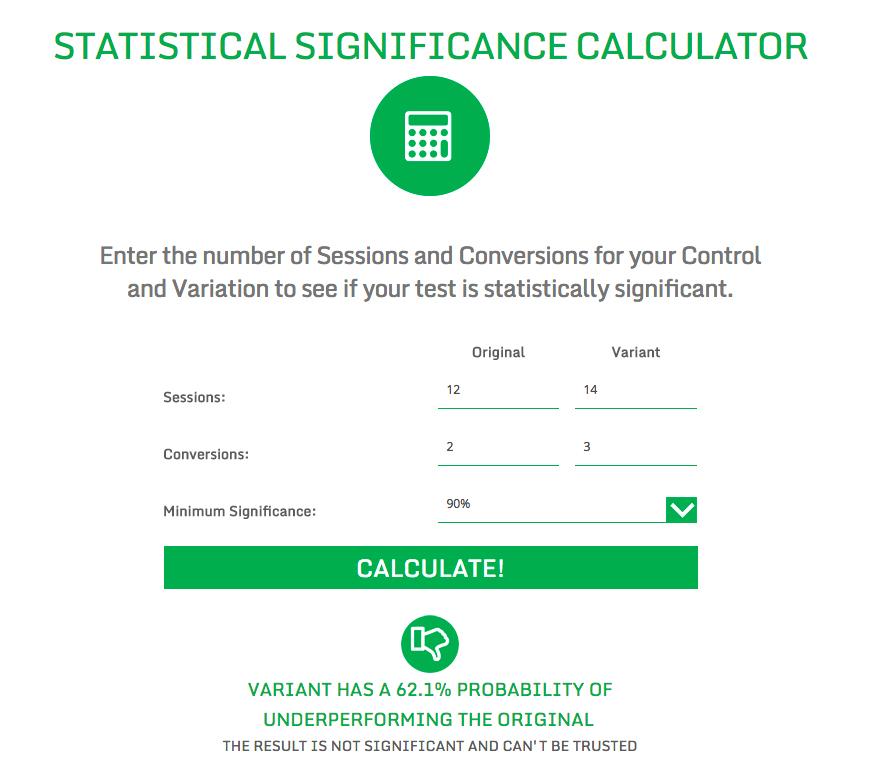

The easiest way to figure this out is to use an online statistical significance calculator. Here's the example Andrew shows using the Splitly calculator:

You simply have to take the following steps:

- Enter your sessions and conversions for both your original (Variant A) and Variant B

- Select your minimum significance – 90% is a common standard, but you can set this higher (95% is also widely used)

- Hit the calculate button and see your results

In the example above, the result is only 62%. This means the result is not statistically significant enough to justify moving forward with your “winning” variant.

If your result is statistically significant, i.e. at or above your chosen threshold (90% here), then you should move forward with the winning variant.

However, if your result is not statistically significant (under 90%), you can continue your test to gather more data points. Alternatively, you can conclude that there is not much difference between the two variants and test something else, or tweak your test.
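The calculator's internal method isn't described in the session, so the sketch below uses a standard two-proportion z-test as a stand-in; it is one common way such calculators work, but its output will not necessarily match Splitly's calculator for the same inputs. It reports "confidence" as 1 minus the two-sided p-value and applies the 90% decision rule described above. The function names are hypothetical.

```python
import math


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def confidence(sessions_a: int, orders_a: int, sessions_b: int, orders_b: int) -> float:
    """Two-proportion z-test; returns 1 - two-sided p-value (between 0.0 and 1.0)."""
    rate_a = orders_a / sessions_a
    rate_b = orders_b / sessions_b
    # Pooled conversion rate, assuming (as the test's null hypothesis) that A and B perform the same.
    pooled = (orders_a + orders_b) / (sessions_a + sessions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sessions_a + 1 / sessions_b))
    if std_err == 0:
        return 0.0  # no variation in the data (e.g. zero orders), so nothing to conclude
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - normal_cdf(abs(z)))
    return 1 - p_value


def decide(sessions_a: int, orders_a: int, sessions_b: int, orders_b: int,
           threshold: float = 0.90) -> str:
    """Adopt the better variant only if the result clears the significance threshold."""
    if confidence(sessions_a, orders_a, sessions_b, orders_b) < threshold:
        return "not significant: keep testing, or test something else"
    if orders_b / sessions_b > orders_a / sessions_a:
        return "adopt variant B"
    return "keep variant A"
```

For example, `decide(1000, 100, 1000, 150)` returns `"adopt variant B"`, while `decide(500, 50, 500, 55)` comes out well under 90% and is reported as not significant.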

When Should I Start Split Testing?

Andrew states that there is no rule of thumb for when to start split testing. It should be an ongoing process of continuous improvement rather than a one-off task, so you can start testing right away.

If you have launched a new product and have low sessions and sales, you may need to run longer tests to reach statistical significance.
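To get a feel for how much longer, you can estimate the sessions each variant needs with the standard sample-size formula for comparing two conversion rates. This is a rough, hypothetical planning sketch that was not covered in the webinar: the baseline conversion rate, the relative lift you hope to detect, and the 80% power default are all assumptions you would choose yourself. Because each variant only runs on alternate days, the total test length is roughly twice the sessions needed per variant divided by your daily sessions, with the two-week minimum as a floor.

```python
import math
from statistics import NormalDist


def sessions_per_variant(base_rate: float, relative_lift: float,
                         confidence: float = 0.90, power: float = 0.80) -> int:
    """Approximate sessions each variant needs to detect the given relative lift."""
    p1 = base_rate
    p2 = base_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_needed(daily_sessions: int, base_rate: float, relative_lift: float,
                confidence: float = 0.90, power: float = 0.80, min_days: int = 14) -> int:
    """Total days to run an alternating-day A/B test, never less than min_days."""
    per_variant = sessions_per_variant(base_rate, relative_lift, confidence, power)
    # Each variant only receives the sessions from every other day.
    days = math.ceil(2 * per_variant / daily_sessions)
    return max(days, min_days)
```

With a 10% conversion rate and a hoped-for 20% relative lift, a listing with 1,000 sessions a day only needs the two-week minimum, while one with 100 sessions a day needs roughly 60 days. Smaller expected lifts push these numbers up sharply.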

The best products to start testing are your best sellers!

Additionally, the best places to start testing your Amazon listing are your price and your image. According to Andrew, these have been the most effective tests Splitly users have run since the tool launched.

However, you can also test the following elements of an Amazon product listing:

- Title

- All images (including image order)

- Features (bullets)

- Product Description

The split test process overview

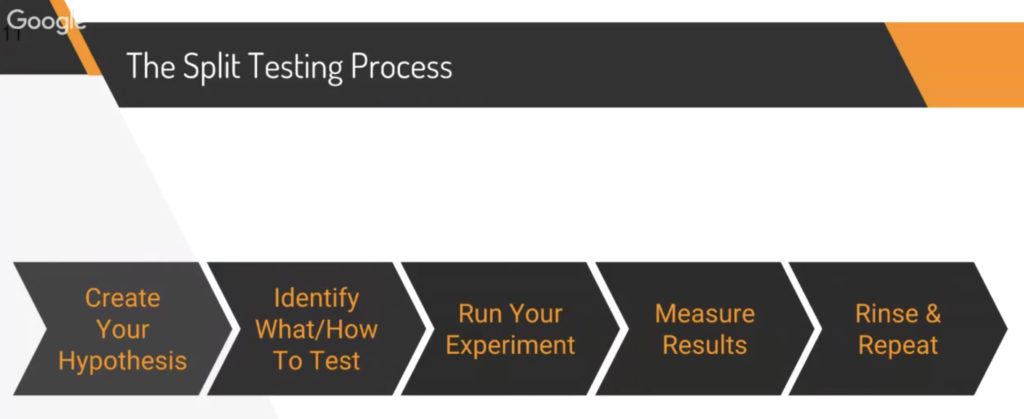

Andrew shared a really useful process which will help you to plan and successfully carry out an ongoing optimization funnel:

- Create your hypothesis – For example, you know that your main competitor's product and listing have a flaw, which you have actively improved on with your own product. Your hypothesis is that highlighting this point of difference in your listing content will lead to more sales.

- Identify what to test – Figure out how best to improve your listing to show off your product. This could be through the images or the product description. If you want to test more than one element, you may even create a schedule to test multiple things over a period of a few months.

- Run your experiment – Whether manually or using software, get going with your first split test as soon as possible.

- Measure results – Similarly, whether you are manually pulling data from Seller Central, or relying on reporting from a tool like Splitly, you need to measure your results effectively to ensure you are making good decisions about which variants to stick with, and what tests to run next.

- Rinse & repeat – Even if you get a really positive result, adopt the winning variant, and increase sales – your work is not done. There are many things you can test and adapt as time moves on. Remember, market trends, seasonality and other factors change!
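If you plan several tests over a few months, as suggested in the "Identify what to test" step, a simple back-to-back schedule is easy to sketch. This hypothetical example assumes you run one test at a time on a listing, so that two changes don't muddy each other's results, and gives each test the two-week minimum; the element names are placeholders.

```python
from datetime import date, timedelta


def build_schedule(start: date, elements: list[str], days_per_test: int = 14):
    """Return (element, first_day, last_day) tuples for back-to-back tests."""
    schedule = []
    current = start
    for element in elements:
        last_day = current + timedelta(days=days_per_test - 1)
        schedule.append((element, current, last_day))
        current = last_day + timedelta(days=1)  # the next test starts the following day
    return schedule


if __name__ == "__main__":
    plan = build_schedule(date(2024, 1, 1), ["price", "main image", "title"])
    for element, first_day, last_day in plan:
        print(f"{element}: {first_day} to {last_day}")
    # price: 2024-01-01 to 2024-01-14
    # main image: 2024-01-15 to 2024-01-28
    # title: 2024-01-29 to 2024-02-11
```

In practice, a test that hasn't reached significance by its end date would push the rest of the schedule back.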

A Note On Conversion Rate

Gen and Andrew have a really interesting conversation in the webinar about the pitfalls of concentrating too heavily on conversion rate rather than profit.

It's not always the case that a lower conversion rate means worse performance. For example, if you raise your price in a pricing test, you might see a drop in conversion rate, but you might be making more profit per day, due to the price increase. There's a really detailed explanation of Amazon conversion rate in this Splitly article.
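A quick worked example shows how this can happen. The numbers below are made up for illustration, and "unit cost" is assumed to cover everything you pay per unit sold (product, shipping, Amazon fees). This simplified sketch ignores ad spend and any sales-velocity effects on rank.

```python
def profit_per_day(sessions_per_day: float, conversion_rate: float,
                   price: float, unit_cost: float) -> float:
    """Daily profit = orders per day x profit per order."""
    orders_per_day = sessions_per_day * conversion_rate
    return orders_per_day * (price - unit_cost)


if __name__ == "__main__":
    # Hypothetical pricing test: same traffic, different price and conversion rate.
    variant_a = profit_per_day(200, 0.12, price=20.00, unit_cost=12.00)  # 24 orders x $8
    variant_b = profit_per_day(200, 0.10, price=24.00, unit_cost=12.00)  # 20 orders x $12
    print(f"A: ${variant_a:.2f}/day, B: ${variant_b:.2f}/day")
    # prints A: $192.00/day, B: $240.00/day
```

Variant B converts worse (10% vs. 12%) but earns more each day, which is why profit, not conversion rate alone, should decide a pricing test.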

Another important thing to remember is that Amazon rewards higher sales velocity. Factor this in when you look at your split test results, as a higher sales velocity may have a positive compound effect over time.

Testing Product Titles & Keywords

One really easy way to test your keywords and their effectiveness is to test your product title. Andrew recommends you test your title sooner, rather than later, in your product's lifecycle.

At the moment, there is a lot of talk about shorter Amazon titles being more effective. But as reiterated throughout Andrew's presentation, the only true way to find out what would work best for your product is to test this assumption, in a controlled A/B test.

You can test your product title length as well as changing some of your keywords.

Tip: Don't forget that you can change the keywords in your listing, or in the search terms field in the backend of Seller Central, at any time. You do not need to run a split test to see the effect this has on your rank, as you can see this on your product listing page.

Conclusion

There is no single right or wrong answer when it comes to product listing optimization. What it comes down to is creating hypotheses and continuously testing them to get data-driven results.

Once you have got past the initial stage of getting your listing out there, it's never too soon to start running split tests. If you don't have the time to do the manual work of changing your listings every day at midnight and crunching the numbers to find statistical significance, you can use Splitly to automate your Amazon listing optimization.

Splitly has three main features:

- Automated split testing for all elements of your Amazon listing.

- Rank tracking – A bonus feature so you can keep on top of your product rankings.

- Profit Peak – An automated repricer for private label sellers that uses artificial intelligence to consistently find the best known price for your product. This lets you maximize profits on autopilot and stay ahead of market trends.

Don't forget, if you want to try Splitly today, use the code 'Million' for 25% off your first month!

What's Next?

Next week we have an exclusive “Ask Me Anything” Session with Greg Mercer. He is going to review any burning questions you have relating to any of the previous Million Dollar Case Study sessions. So if you've hit roadblocks, are experiencing challenges or you just want to join in the dialogue, make sure you are registered!